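To visualize the layout, consider a contiguous tensor such as x = torch.arange(12). For example, our input tensor aten has the shape (2, 3).

A common question shows why the layout matters. Start from the original tensor A = torch.rand(5, 100, 20). The first method transposes and then reshapes: B = A.transpose(2, 1), followed by B = B.view(5, 20, 10, 10). The second method reshapes directly: C = A.view(5, 20, 10, 10). Both methods work, but the outputs are slightly different, and the asker could not see why. The reason is that view() never moves data. It only reinterprets the existing element order under a new shape. After transpose(2, 1), element (i, j, k) of B is A[i, k, j], so B[i, j] gathers the j-th column of A[i]. C[i, j], on the other hand, is simply the j-th run of 100 consecutive elements of A[i] in memory.

torch.stack(tensors, dim=0, *, out=None) → Tensor concatenates a sequence of tensors along a new dimension. Its parameters are tensors (sequence of Tensors), the sequence of tensors to concatenate, and dim, the dimension to insert.

torch.nn.Embedding is a simple lookup table that stores embeddings of a fixed dictionary and size.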
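The two methods from the question can be reproduced in a few lines. This is a minimal sketch, assuming a recent PyTorch install; the seed and the indices checked (row 0, slot 3) are arbitrary choices for illustration. It shows that B and C have the same shape but hold different elements, and it also checks that torch.stack behaves like unsqueezing each input and concatenating.

```python
import torch

torch.manual_seed(0)

# Original tensor from the question: shape (5, 100, 20)
A = torch.rand(5, 100, 20)

# First method: swap the last two axes, then view.
# transpose returns a non-contiguous view; view still succeeds here because
# it only splits the last dimension (size 100) into (10, 10).
B = A.transpose(2, 1).view(5, 20, 10, 10)

# Second method: reinterpret the same contiguous buffer directly.
C = A.view(5, 20, 10, 10)

print(B.shape, C.shape)   # torch.Size([5, 20, 10, 10]) torch.Size([5, 20, 10, 10])
print(torch.equal(B, C))  # False: same shape, different element order

# B[i, j] holds column j of A[i], i.e. feature j across all 100 rows
assert torch.equal(B[0, 3].flatten(), A[0, :, 3])
# C[i, j] holds the j-th block of 100 consecutive elements of A[i] in memory
assert torch.equal(C[0, 3].flatten(), A[0].flatten()[300:400])

# torch.stack joins tensors along a new dimension (dim=0 here), which
# matches unsqueezing each input at that dim and concatenating.
s = torch.stack([A, A], dim=0)  # shape (2, 5, 100, 20)
assert torch.equal(s, torch.cat([A.unsqueeze(0), A.unsqueeze(0)], dim=0))
```

Which method is right depends on what the 20 axis means. If it indexes features that should stay together, the transpose-first version is the one that keeps each feature's 100 values in one (10, 10) block.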

For a contiguous tensor, the k-th stride is the product of all dimension sizes that come after the k-th axis. For a 3-D tensor y, that gives y.stride(0) = y.size(1) * y.size(2), y.stride(1) = y.size(2), and y.stride(2) = 1. Because Transpose/Permute is such a heavily used op, the performance of its CUDA implementation affects the training speed of real networks.

One Q&A answer illustrates reshaping with a small example. Given aten = torch.tensor([[1, 2, 3], [4, 5, 6]]), printing aten gives tensor([[1, 2, 3], [4, 5, 6]]), and aten.shape is torch.Size([2, 3]). Tensor.view() (for example aten.view(3, 2)) reshapes the tensor to a different but compatible shape.

Image localization is an interesting application for me, as it falls right between image classification and object detection. Follow part 2 of this tutorial series to see how to train a classification model for object localization using CNNs and PyTorch. Then see how to save and convert the model to ONNX.

The constructor signature of torch.nn.Embedding is torch.nn.Embedding(num_embeddings, embedding_dim, padding_idx=None, max_norm=None, norm_type=2.0, scale_grad_by_freq=False, sparse=False, _weight=None, _freeze=False, device=None, dtype=None).

The Permute class, torchvision.ops.Permute(dims: List[int]), is a module that returns a view of the tensor input with its dimensions permuted.

torch.moveaxis(input, source, destination) → Tensor moves the dimension(s) of input at the position(s) in source to the position(s) in destination. This function is equivalent to NumPy's moveaxis function.

torch.roll(input, shifts, dims=None) → Tensor rolls the tensor input along the given dimension(s). Elements that are shifted beyond the last position are re-introduced at the first position. If dims is None, the tensor will be flattened before rolling and then restored to the original shape.

Because torch.moveaxis() returns a view and only rewrites shape and stride metadata, its own cost does not depend on the tensor's data type. The data type (element size) matters once a later operation, such as .contiguous(), actually copies the data. When using these functions, keep track of how many axes the tensor has and where each one sits, because source and destination positions are interpreted against the tensor's shape.
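The stride rule, the aten example and torch.roll can all be checked directly. This is a minimal sketch, assuming a recent PyTorch install; the tensors y, x and m are hypothetical examples chosen only to make the numbers easy to follow.

```python
import torch

# The aten example: a (2, 3) tensor whose strides are (3, 1)
aten = torch.tensor([[1, 2, 3], [4, 5, 6]])
print(aten.shape)                 # torch.Size([2, 3])
print(aten.stride())              # (3, 1)
print(aten.view(3, 2).tolist())   # [[1, 2], [3, 4], [5, 6]]

# Contiguous 3-D tensor: each stride is the product of the sizes after it
y = torch.arange(24).reshape(2, 3, 4)
assert y.stride() == (y.size(1) * y.size(2), y.size(2), 1)  # (12, 4, 1)

# torch.roll: elements pushed past the end wrap around to the start
x = torch.arange(1, 6)
print(torch.roll(x, shifts=1).tolist())           # [5, 1, 2, 3, 4]

# With dims=None the tensor is flattened, rolled, then reshaped back
m = torch.tensor([[1, 2], [3, 4]])
print(torch.roll(m, shifts=1).tolist())           # [[4, 1], [2, 3]]
# With dims=0 whole rows are rolled instead
print(torch.roll(m, shifts=1, dims=0).tolist())   # [[3, 4], [1, 2]]
```

Note that the flattened roll mixes elements across rows, while the dims=0 roll keeps each row intact.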

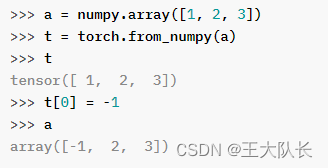

torch.moveaxis() is an alias for torch.movedim(), so the two behave identically. torch.permute() is more general: torch.permute(input, dims) → Tensor returns a view of the original tensor input with its dimensions permuted, where input (Tensor) is the input tensor and dims lists the desired order of every dimension, not just the ones that move. Under the hood these operations are essentially the same: the underlying data storage buffer is kept the same, and only the metadata, i.e. how you interact with that buffer (strides and shape), changes. permute is a fast operation on tensor metadata; it does not invoke memcopies, and it did not change between versions 1.4 and 1.5. Together, these functions cover many everyday needs, such as rearranging and reshaping tensors. Since permute doesn't affect the underlying memory layout, the permute operation itself is not to blame for a slowdown; any cost appears only when a later operation copies the permuted data.
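To see that permute and movedim only touch metadata, the sketch below compares data pointers and strides. It assumes a recent PyTorch install; the (2, 3, 4) tensor is a hypothetical example. It also shows that a real copy happens only when .contiguous() is called.

```python
import torch

x = torch.arange(24).reshape(2, 3, 4)

# permute takes the full new order of dimensions
p = x.permute(2, 0, 1)       # shape (4, 2, 3)
# movedim names only the axis that moves; the rest keep their relative order
m = torch.movedim(x, 2, 0)   # also shape (4, 2, 3)
assert torch.equal(p, m)

# Both are views: same storage, only shape/stride metadata differ
assert p.data_ptr() == x.data_ptr() == m.data_ptr()
print(x.stride(), p.stride())   # (12, 4, 1) (1, 12, 4)
print(p.is_contiguous())        # False

# .contiguous() is what actually copies the data into a new buffer
q = p.contiguous()
print(q.stride(), q.data_ptr() == x.data_ptr())   # (6, 3, 1) False
```

Timing p alone would therefore say little about performance; the interesting cost is in q, or in whatever kernel later consumes the non-contiguous view.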

The torch.moveaxis() function in PyTorch allows you to move axes to new positions while keeping the other axes in their original order.
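Here is a short example of that behavior, with single and multiple axes. This is a minimal sketch, assuming a recent PyTorch install; the (2, 3, 4, 5) tensor t is hypothetical. Each call is also checked against the equivalent explicit permute order.

```python
import torch

t = torch.arange(120).reshape(2, 3, 4, 5)

# Move axis 0 to the end; axes 1, 2, 3 keep their original order
print(torch.moveaxis(t, 0, -1).shape)   # torch.Size([3, 4, 5, 2])
assert torch.equal(torch.moveaxis(t, 0, -1), t.permute(1, 2, 3, 0))

# Several axes at once: source and destination are matched pairwise,
# so axis 0 goes to position -1 and axis 1 goes to position -2
print(torch.moveaxis(t, (0, 1), (-1, -2)).shape)   # torch.Size([4, 5, 3, 2])
assert torch.equal(torch.moveaxis(t, (0, 1), (-1, -2)), t.permute(2, 3, 1, 0))

# moveaxis is an alias of movedim
assert torch.equal(torch.moveaxis(t, 1, 3), torch.movedim(t, 1, 3))
```

moveaxis is convenient when only one or two axes move; permute is clearer when the whole order changes.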
